Optimizing Player Tracking: Innovative Solutions for Smile in the Light

Body Tracking for Smile in the Light

Computer vision | OpenCV | Projection mapping

Concept

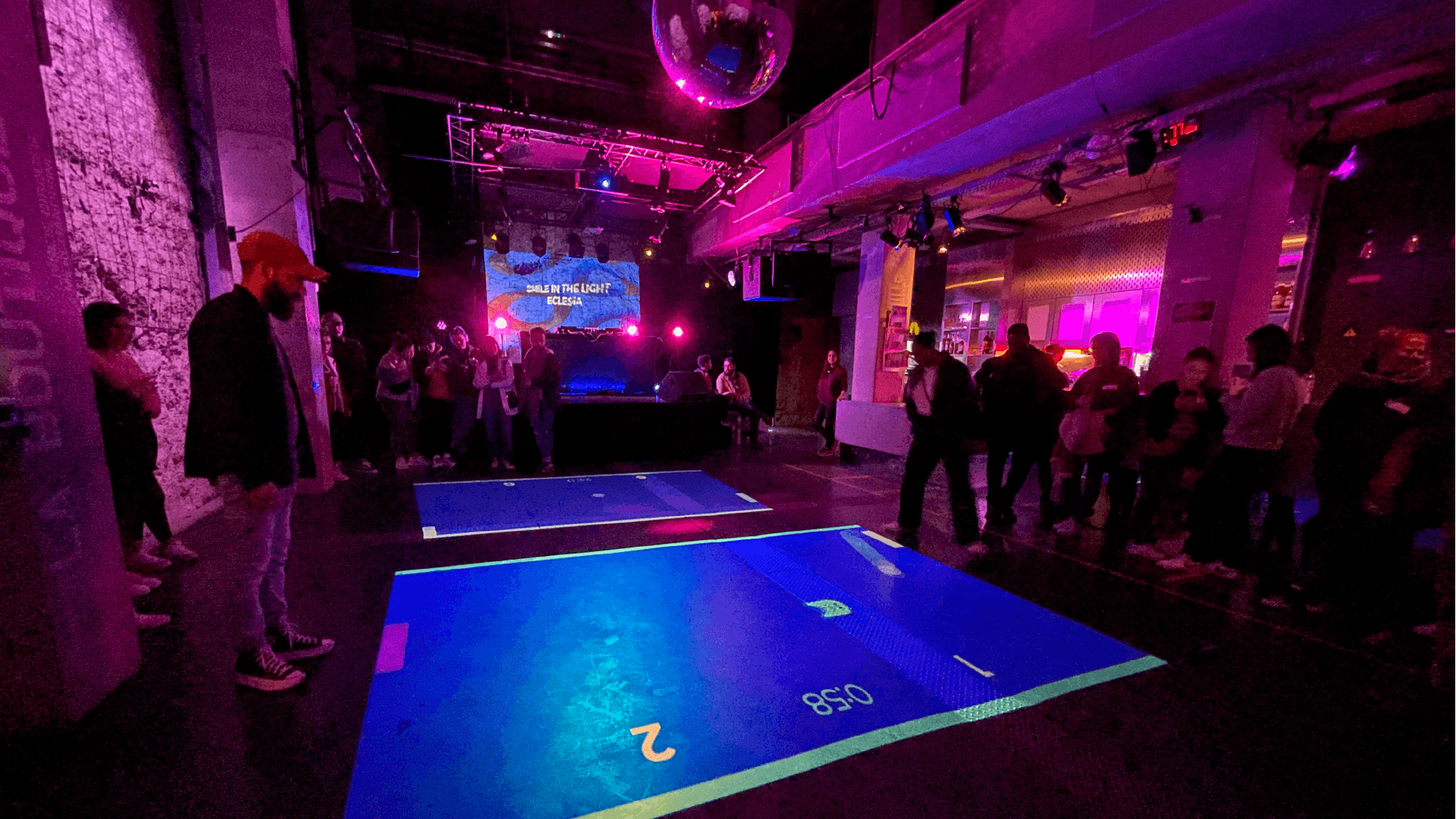

Smile in the Light is a system of interactive, collaborative games for waiting times. The start-up behind it uses games to create moments of social cohesion and conviviality. It called on TorrusVR to help solve two technical problems: tracking people’s positions and calibrating that tracking with video projectors.

Project

Smile in the Light had already produced its game, but needed to improve the tracking of player positions. In particular, there were three issues to resolve:

- Being able to use at least 2 cameras on the same PC
- Keeping latency as low as possible
- Being able to track up to 4 players simultaneously

One of the conditions was to use Intel RealSense depth cameras. Generally speaking, we prefer their competitor, the Azure Kinect, because it already incorporates body tracking, whereas Intel now relies on third-party plug-ins for this. The real problem is that these third-party plug-ins don’t support more than one camera per PC, which is a surprising limitation. Since the client only needs the position of the players, we decided to write our own custom point cloud analysis algorithm.

Tracking algorithms

Projector calibration

Cameras and projectors are never perfectly aligned. So you need to be able to calibrate the cameras with the projector, so that when a camera sees a person or a point, you know ‘where’ on the projector screen that person is. This is crucial in order to make sense of the position tracking. Fortunately, we have developed an algorithm that calculates this calibration in a fraction of a second. All the cameras have to do is see the projector image, and in just a few frames they know exactly where they are in relation to the projection screen. It’s a bit like our magic formula, so we won’t dwell on the method any further…
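The calibration method itself is not described here, so the following is only an illustration of what a calibration result can look like once it exists. It assumes the projection surface is flat and that the calibration is stored as a 3x3 planar homography, which is one common representation and not necessarily the one used in this project. The function name `apply_homography` and the matrix `H_EXAMPLE` are hypothetical.

```python
def apply_homography(H, x, y):
    """Map a camera-space point (x, y) to projector pixels using a 3x3 matrix H.

    Assumption: the calibration is a planar homography. The source does not
    say how its calibration is represented.
    """
    u = H[0][0] * x + H[0][1] * y + H[0][2]
    v = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    if abs(w) < 1e-12:
        raise ValueError("point maps to infinity under this homography")
    # Divide by the homogeneous coordinate to get pixel coordinates.
    return u / w, v / w


# Hypothetical calibration: scale camera coordinates by 2, then shift by (100, 50).
H_EXAMPLE = [
    [2.0, 0.0, 100.0],
    [0.0, 2.0, 50.0],
    [0.0, 0.0, 1.0],
]
```

With such a matrix, each tracked position can be converted once per frame, so the game only ever works in projector coordinates.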

Point cloud analysis

As we’ve seen from our work on projects using depth cameras (particularly the Kinect), the ‘body tracking’ part is often extremely costly in terms of GPU and creates latency. What’s more, we don’t always need to know the position of every limb of the player’s body, but often only their position on the ground. In general, we therefore analyse the point cloud directly from the camera output. The first step is often to capture a ‘baseline’ of the empty room, which we use to detect any differences between what is being scanned and this baseline. These differences are then fed into a ‘blob’ detection algorithm, which looks for all the clusters of points that are close together. The last stage is the tracking itself: we keep a history of the ‘blobs’ in order to associate new positions with old ‘blobs’, and to detect when a new player arrives or a player leaves. For this project, the entire algorithm is written in C#, so that the client gets a Unity package that can be integrated directly into the game.
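The three stages described above (baseline difference, blob detection, history-based tracking) can be sketched as follows. This is a minimal Python illustration on a small 2D depth grid, not the C# implementation delivered to the client. The function names, the thresholds, the 4-connected flood fill and the greedy nearest-neighbour matching are all assumptions chosen to keep the example short.

```python
from collections import deque
from math import dist


def foreground_mask(depth, baseline, threshold=0.15):
    """Flag cells whose depth differs from the empty-room baseline by more than threshold."""
    return [[abs(d - b) > threshold for d, b in zip(d_row, b_row)]
            for d_row, b_row in zip(depth, baseline)]


def find_blobs(mask, min_size=3):
    """Group 4-connected foreground cells; return each blob's centroid as (row, col)."""
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    centroids = []
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            queue, cells = deque([(r, c)]), []
            seen[r][c] = True
            while queue:  # breadth-first flood fill of one blob
                y, x = queue.popleft()
                cells.append((y, x))
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if 0 <= ny < rows and 0 <= nx < cols and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            if len(cells) >= min_size:  # ignore tiny clusters (likely sensor noise)
                centroids.append((sum(y for y, _ in cells) / len(cells),
                                  sum(x for _, x in cells) / len(cells)))
    return centroids


class BlobTracker:
    """Greedy nearest-neighbour association of new centroids with known players."""

    def __init__(self, max_distance=2.0):
        self.max_distance = max_distance
        self.players = {}  # player id -> last known centroid
        self._next_id = 0

    def update(self, centroids):
        unmatched = dict(self.players)
        updated = {}
        for centroid in centroids:
            best = min(unmatched, key=lambda pid: dist(unmatched[pid], centroid), default=None)
            if best is not None and dist(unmatched[best], centroid) <= self.max_distance:
                updated[best] = centroid  # same player, new position
                del unmatched[best]
            else:
                updated[self._next_id] = centroid  # a new player has arrived
                self._next_id += 1
        self.players = updated  # anyone still in `unmatched` has left
        return updated
```

Calling `tracker.update(find_blobs(foreground_mask(frame, baseline)))` once per frame returns a dictionary of player ids and positions. A production tracker would typically also tolerate a player disappearing for a few frames before dropping them, which this sketch does not do.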

Conclusion

Obviously, these algorithms are not simple to put in place. Our experience in tracking and computer vision is what enables us to deliver a project like this quickly, where developers who don’t specialise in this area would have had to spend a lot more time on it. We’ve developed an in-house framework that lets us quickly create tracking solutions for interactive walls, tracking people, faces and hands to make your experiences more interactive!

Our client was delighted with the result: the project was delivered and required no modification.

Do you have a project or need information?

Don't hesitate to contact us!

contact@torrusvr.com

Bordeaux / Paris (FR)
